In the middle of winter in 2016, users of the popular Nest smart thermostat tried to heat their homes – but quickly realized they were unable to.

Due to a faulty software update, customers couldn’t set the thermostat to their desired temperature; instead of warming up, their homes kept dropping toward freezing.

This is only one story of software testing gone wrong: These software developers tried to build a stable program, but maintenance, upgrading, project infrastructure complexity, and other factors can get in the way of creating that perfect piece of software, leaving end-users out in the cold – sometimes literally.

Software testing plays a critical role in building stable software and helping developers break through common issues, but it is also the easiest step to get wrong without a knowledgeable testing team, which is why so many product developers decide to outsource it.

Before doing that, it’s essential to understand the basics of software testing, including what you can expect and the types of testing that will be conducted.

What Is Software Testing?

Software testing evaluates a program’s functionality to:

- Determine whether it meets all user specifications

- Identify any existing and potential issues, bugs, or defects

Ideally, the testing process should examine each detail and feature to see whether it works as intended. If any feature deviates from the expected behavior, it is logged as a problem. Developers then go through the logged bugs and try to fix them.

The software testing process is not a one-and-done activity: It’s a continuous undertaking that should be performed after each sprint or set of new features is completed.

Take a team of developers who work in three-week sprints. During those three weeks, they develop new features and functionality or address previously detected bugs.

After the three weeks, the new functionality is handed to the testing team, who will then look through it, test program behavior, and identify errors. These errors are fed back to the developers, who address them in the next sprint.

You might be asking, “Why are there any problems? Shouldn’t the developers do their jobs properly and get everything right the first time?”

That would happen in a perfect world, but developing software is both an art and a science with many moving pieces. Programming requires a high level of understanding, and it’s not uncommon for small changes to cause major malfunctions.

Because it’s so easy for things to go wrong during the development stage, companies use software testing to fill in the gap – and here’s why:

Benefit #1: Cost Savings

If you developed an entire software application and only then started testing, the testing process would be more complicated and more expensive.

Testers would have a hard time tracing back issues and defects to the correct feature or piece of code. One part of the code might fix some problems but introduce other ones. It would be a never-ending project with a poorly-built program at each finish line.

Implementing a healthy software development life cycle saves a lot of time and money. After each sprint, a software tester has the documentation for everything the developers have done and can check added software components from the previous sprint.

Benefit #2: Customer Satisfaction

When customers run into errors, their experience quickly sours, which in turn means less satisfaction with the software and the brand.

Out of frustration, they might simply stop using your software and switch to a competitor. Major corporations can usually bounce back from this, but it is especially bad news for startups.

In fact, nearly 70% of consumers have cited a bad experience with a product as the reason they haven’t returned. With frequent testing – and customer satisfaction kept at the forefront – users are more likely to stick around.

Benefit #3: Better Security

Security breaches happen all too often, sometimes in frightening forms: In 2021, U.S. tech provider Kaseya was hit by a ransomware attack in which hackers demanded $70 million to restore the data they had encrypted.

But thorough penetration and security testing leads to a program that is more difficult to hack. As a result, you are better able to protect your customers’ data, which strengthens brand trust and reputation.

Benefit #4: Quality Product

A high-quality product strengthens a company’s reputation because customers can vouch for the program and attest to the fact that it meets all their requirements and expectations.

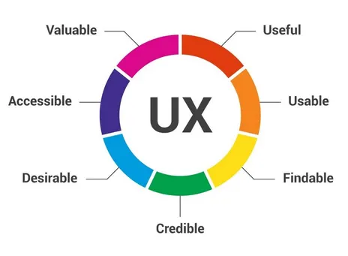

Research has found that there are seven factors that most influence a user’s experience:

- Useful – The product serves a purpose

- Usable – Users can achieve their objectives with the product

- Findable – The product is easy to locate among digital products and software

- Credible – Users can trust the product through a positive experience

- Desirable – The product’s branding, image, identity, and emotional design appeal to users

- Accessible – The product can be used by people of all abilities, including those with visual, motor, or hearing impairments

- Valuable – The product delivers value by solving a problem or meeting a need

When you test software, you may have to spend more time upfront, but that time pays off in revenue and loyalty because the product addresses these seven essential factors.

Types of Software Testing

The overarching goal of the software development process is to build software with fewer issues. But because development is so complex, software testing is necessary to help developers build a stable program.

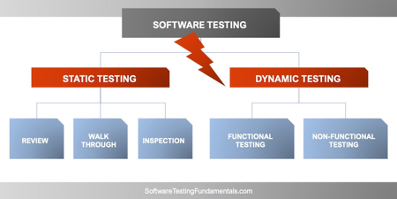

There are two main types of testing your company will need to use when developing software: Dynamic and static testing. (And within both of these, there are several types of subtests you’ll want to consider to ensure you’re covering all bases – but we’ll cover that in the next sections.)

Software Testing Type #1: Dynamic Testing

Dynamic testing, also called validation testing, involves running the real product and observing how it behaves in its current state. Testers then determine whether the development team is building the right product with the proper requirements and features.

Basically, dynamic testing validates that the software works as intended.

Dynamic testing is split into two main avenues: Functional and non-functional testing, with subtesting methods that fall under each umbrella.

Functional Testing

Functional testing is a specific testing technique used to determine whether a program’s features work as required before it’s released.

Testers execute various test cases and work from requirements documentation and user stories to determine whether a user can engage with each feature as intended.

Testers sometimes use black-box or gray-box testing to complete functional tests. These behavioral, specification-based methods let them evaluate whether the software works without examining every internal code structure.
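To illustrate the black-box idea, here is a minimal Python sketch. It assumes a hypothetical requirement (target temperatures from 10 to 30 °C inclusive are accepted, anything else is rejected) and a hypothetical `Thermostat` class standing in for the product. The test cases are derived from that requirement alone, using boundary-value analysis (one common black-box technique), rather than from reading the implementation.

```python
import unittest

# Limits taken from the assumed requirement, not from the implementation.
MIN_C, MAX_C = 10, 30


class Thermostat:
    """Hypothetical stand-in for the product under test."""

    def __init__(self) -> None:
        self.target_c = 20

    def set_target(self, celsius: float) -> None:
        if not MIN_C <= celsius <= MAX_C:
            raise ValueError(f"target {celsius} C is out of range")
        self.target_c = celsius


class TargetTemperatureSpec(unittest.TestCase):
    # Boundary-value analysis: probe each edge and the values just beyond it.
    VALID = [MIN_C, MIN_C + 1, 21, MAX_C - 1, MAX_C]
    INVALID = [MIN_C - 1, MAX_C + 1, -40, 100]

    def test_valid_targets_are_accepted(self):
        for value in self.VALID:
            with self.subTest(value=value):
                t = Thermostat()
                t.set_target(value)
                self.assertEqual(t.target_c, value)

    def test_invalid_targets_are_rejected(self):
        for value in self.INVALID:
            with self.subTest(value=value):
                t = Thermostat()
                with self.assertRaises(ValueError):
                    t.set_target(value)
                self.assertEqual(t.target_c, 20)  # previous setting is kept
```

Saved to a file, the tests run with `python -m unittest <file>.py`. In a real project, the stand-in class would be replaced by the actual product, and the test cases would stay the same because they depend only on the specification.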

Unit Testing

Unit testing involves testing different units or components of a program. This type of testing aims to determine whether each part of the software is well-designed and performs as it should.

Unlike many other types of testing, such as acceptance testing or regression testing, unit testing is performed by software developers because it requires deep knowledge of the source code.

Developers use the white-box testing method to perform unit testing. Because white-box testing gives them full view of the code, developers can design test cases and test environments for each unit.
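By contrast with black-box testing, a white-box unit test is written with the code in view. The sketch below is illustrative only: `heater_should_run` is a hypothetical unit with three branches, and the developer writes one test per branch so that every path through it is exercised.

```python
import unittest


def heater_should_run(current_c: float, target_c: float, heater_on: bool,
                      band_c: float = 0.5) -> bool:
    """Hypothetical unit: decide whether the heater runs, using a small
    hysteresis band so it doesn't rapidly switch on and off."""
    if current_c < target_c - band_c:
        return True          # branch 1: clearly too cold
    if current_c > target_c + band_c:
        return False         # branch 2: clearly warm enough
    return heater_on         # branch 3: inside the band, keep current state


class HeaterShouldRunTest(unittest.TestCase):
    # White-box: one test per branch, chosen by reading the code above.
    def test_turns_on_when_too_cold(self):
        self.assertTrue(heater_should_run(18.0, 21.0, heater_on=False))

    def test_turns_off_when_warm_enough(self):
        self.assertFalse(heater_should_run(22.0, 21.0, heater_on=True))

    def test_keeps_state_inside_band(self):
        self.assertTrue(heater_should_run(21.2, 21.0, heater_on=True))
        self.assertFalse(heater_should_run(20.8, 21.0, heater_on=False))
```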

Integration Testing

Integration testing checks whether two or more software modules or integrations work together the way they’re designed to.

Testers can run different test scripts to check if these modules work well together. They’ll also use various methods to verify that there are no flaws in connectivity, including the top-down approach, bottom-up approach, and sandwich approach.
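As a rough sketch of the top-down approach, the Python below integration-tests a hypothetical `Controller` module together with a `Heater` module, while a stub replaces a sensor module that is assumed not to be ready yet. All three classes are made up for illustration; the point is that the test checks how the modules interact rather than how each one behaves alone.

```python
import unittest


class SensorStub:
    """Stub for a lower-level module that isn't finished yet (top-down)."""

    def __init__(self, readings):
        self._readings = iter(readings)

    def read_celsius(self) -> float:
        return next(self._readings)


class Heater:
    """Hypothetical lower-level module."""

    def __init__(self) -> None:
        self.on = False

    def switch(self, on: bool) -> None:
        self.on = on


class Controller:
    """Hypothetical higher-level module that wires the sensor to the heater."""

    def __init__(self, sensor, heater, target_c: float) -> None:
        self.sensor, self.heater, self.target_c = sensor, heater, target_c

    def tick(self) -> None:
        self.heater.switch(self.sensor.read_celsius() < self.target_c)


class ControllerIntegrationTest(unittest.TestCase):
    def test_heater_follows_sensor_readings(self):
        heater = Heater()
        controller = Controller(SensorStub([18.0, 22.0]), heater, target_c=21.0)
        controller.tick()
        self.assertTrue(heater.on)    # cold reading -> heater switched on
        controller.tick()
        self.assertFalse(heater.on)   # warm reading -> heater switched off
```

In the bottom-up approach the roles flip: lower-level modules are tested first, driven by small test harnesses (drivers) that stand in for the modules above them. The sandwich approach combines both.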

System Testing

System testing (or end-to-end testing) determines whether the complete program works on all target systems. Testers exercise the program’s inputs and check what happens in the output. This includes user acceptance testing, which helps developers see whether the program is on par with user requirements.
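A minimal end-to-end sketch: the whole program runs as a separate process, inputs are fed in from the outside, and the printed output is checked without calling any internal functions. The inlined `PROGRAM` is a hypothetical stand-in for a real, built application, and the expected outputs come from its assumed requirements.

```python
import subprocess
import sys

# Hypothetical complete program under test, inlined so the sketch is
# self-contained. In practice this would be your built application.
PROGRAM = r'''
import sys
for line in sys.stdin:
    value = float(line)
    if 10 <= value <= 30:
        print(f"target set to {value:g} C")
    else:
        print("error: out of range")
'''


def run_program(stdin_text: str) -> str:
    """Run the whole program the way a user would and capture its output."""
    result = subprocess.run(
        [sys.executable, "-c", PROGRAM],
        input=stdin_text, capture_output=True, text=True,
        timeout=10, check=True,
    )
    return result.stdout


# End-to-end cases: input goes in from outside, output is checked from outside.
CASES = {
    "21\n": "target set to 21 C\n",
    "45\n": "error: out of range\n",
}

if __name__ == "__main__":
    for given, expected in CASES.items():
        actual = run_program(given)
        status = "PASS" if actual == expected else "FAIL"
        print(f"{status}: input {given.strip()!r} -> {actual.strip()!r}")
```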

Non-functional Testing

Non-functional testing covers the “external” experience a user has with the software, whereas functional testing emphasizes the software’s “internal” aspects.

So although non-functional and functional testing are two peas in a pod, this type of testing focuses on improving the user’s experience through qualities such as load handling, usability, and scalability.

Load Testing

Load testing is when testers place an expected level of demand on the software and measure how it responds.

For example, let’s say that you want your program to load successfully in less than two seconds. The software testing team will then measure whether or not it’s meeting this need – and if it’s not, then they’ll work to identify potential bugs and how to improve its load time.
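Here is a small Python sketch of that two-second check. `load_page` is a hypothetical stand-in that just sleeps; in a real load test it would send a request to the deployed application, and a dedicated load-testing tool would usually generate the traffic. The harness runs requests from a pool of concurrent workers and compares an approximate 95th-percentile response time against the target.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor

TARGET_SECONDS = 2.0  # the "loads in under two seconds" goal from the text


def load_page() -> None:
    """Hypothetical stand-in for one user request. A real test would call
    the deployed application here (for example, an HTTP GET)."""
    time.sleep(0.05)


def timed_request(_: int) -> float:
    """Return how long one request took, in seconds."""
    start = time.perf_counter()
    load_page()
    return time.perf_counter() - start


def run_load_test(users: int, requests_per_user: int) -> dict:
    """Send requests from `users` concurrent workers and summarize latency."""
    total = users * requests_per_user
    with ThreadPoolExecutor(max_workers=users) as pool:
        latencies = sorted(pool.map(timed_request, range(total)))
    # Simple approximate 95th percentile: the value 95% of the way up the sorted list.
    p95 = latencies[int(0.95 * (len(latencies) - 1))]
    return {
        "requests": total,
        "median_s": statistics.median(latencies),
        "p95_s": p95,
        "meets_target": p95 < TARGET_SECONDS,
    }


if __name__ == "__main__":
    print(run_load_test(users=20, requests_per_user=5))
```

Checking a high percentile rather than the average is a deliberate choice: a page can be fast on average while a noticeable share of users still waits longer than two seconds.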

Usability Testing

Usability testing is perhaps one of the most essential parts of any testing process. It zeroes in on how usable and functional the product is for the average user, aiming to answer questions like:

- Is it easy to use and navigate?

- Is its purpose clear?

- Does it do what it’s intended to?

- Can the user complete tasks?

Where the product exposes an Application Programming Interface (API), which allows external applications to communicate with the software you’re building, testers will also check that API directly.

This check does not include exploratory testing or performance testing of the connectivity; it’s only focused on determining whether the API works.
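A minimal sketch of such an API check, using only Python’s standard library. The `/api/status` endpoint and its JSON fields are hypothetical, and a tiny local server plays the part of the software being built so the example runs on its own; in practice, `check_status_endpoint` would point at the real service.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class FakeApiHandler(BaseHTTPRequestHandler):
    """Hypothetical API under test: GET /api/status returns JSON."""

    def do_GET(self):
        if self.path == "/api/status":
            body = json.dumps({"status": "ok", "target_c": 21}).encode()
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)
        else:
            self.send_error(404)

    def log_message(self, *args):  # silence per-request logging
        pass


def check_status_endpoint(base_url: str) -> dict:
    """Call the endpoint as an external application would and verify the
    contract: status code, content type, and response fields."""
    with urllib.request.urlopen(f"{base_url}/api/status", timeout=5) as resp:
        assert resp.status == 200
        assert resp.headers["Content-Type"] == "application/json"
        payload = json.loads(resp.read())
    assert payload["status"] == "ok"
    assert isinstance(payload["target_c"], int)
    return payload


if __name__ == "__main__":
    server = HTTPServer(("127.0.0.1", 0), FakeApiHandler)  # port 0: any free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        print(check_status_endpoint(f"http://127.0.0.1:{server.server_port}"))
    finally:
        server.shutdown()
        server.server_close()
```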

Scalability Testing

You’re probably familiar with the term “outage.”

Sometimes, when too many users access a website or application at once, it goes down; this is called an outage, or downtime. It happens when the product can’t scale to meet its users’ current demand.

For example, major online banking services may go down if too many customers are trying to log on at once. Similarly, games that connect to the Internet may not reach the server if too many players are on at the same time.

Scalability testing checks that the product keeps working as well as possible no matter how many people are using it at any given time.
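One way to sketch a scalability test is to step the number of simultaneous users up and watch where requests start failing. In the Python below, the “service” is a hypothetical model with room for 10 requests at a time (`CAPACITY`), and a request fails if it waits longer than `QUEUE_TIMEOUT` for a slot. All of the numbers are assumptions chosen for illustration; a real test would target a deployed system.

```python
import threading
import time
from concurrent.futures import ThreadPoolExecutor

# Hypothetical service model: room for 10 requests at a time.
CAPACITY = 10
WORK_SECONDS = 0.1      # how long each request occupies a slot
QUEUE_TIMEOUT = 0.15    # how long a request waits for a slot before failing
_slots = threading.BoundedSemaphore(CAPACITY)


def handle_request(_: int) -> bool:
    """Return True if the request was served, False if it timed out waiting."""
    if not _slots.acquire(timeout=QUEUE_TIMEOUT):
        return False
    try:
        time.sleep(WORK_SECONDS)
        return True
    finally:
        _slots.release()


def ramp(user_levels):
    """Run one burst per load level; report (users, error rate) pairs."""
    report = []
    for users in user_levels:
        with ThreadPoolExecutor(max_workers=users) as pool:
            results = list(pool.map(handle_request, range(users)))
        report.append((users, results.count(False) / users))
    return report


if __name__ == "__main__":
    for users, error_rate in ramp([5, 10, 20, 40]):
        print(f"{users:>3} users -> {error_rate:.0%} of requests failed")
```

With these settings, up to about 20 users should be served, because a second wave of slots frees up before the timeout, while at 40 users a sizable share of requests should fail. That rising error rate marks the point where the modeled product stops scaling.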

Software Testing Type #2: Static Testing

Static testing, also known as verification testing, examines the program without running it. Instead, testers check the program’s documents and files to ensure that the developers are building the right product according to customer specifications.

The benefit is that it helps both the company and its developers understand whether the program is what customers have been looking for. The downside is that static testing can be highly manual and time-consuming.
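Static checks on the code itself can be partly automated, which eases some of that manual burden. This Python sketch uses the standard library’s `ast` module to read source code without executing it and flag two example review rules: functions without docstrings and bare `except:` clauses. The rules and the sample `SOURCE` are hypothetical. Note that `apply` is never defined in the sample, which doesn’t matter, since the code is never run.

```python
import ast

# Hypothetical source under review; ast.parse reads it but never executes it.
SOURCE = '''
def set_target(value):
    try:
        apply(value)
    except:
        pass

def read_sensor():
    """Return the current temperature."""
    return 21.0
'''


def review(source: str) -> list[str]:
    """Flag two example review rules without running the code:
    functions missing a docstring, and bare `except:` clauses."""
    findings = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.FunctionDef) and ast.get_docstring(node) is None:
            findings.append(f"line {node.lineno}: {node.name}() has no docstring")
        if isinstance(node, ast.ExceptHandler) and node.type is None:
            findings.append(f"line {node.lineno}: bare 'except:' hides errors")
    return findings


if __name__ == "__main__":
    for finding in review(SOURCE):
        print(finding)
```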

Like dynamic testing, static testing also includes a few different steps that testers have to go through: Review, walkthrough, and inspection.

Review: Software Inspection

Software inspection is done very early on during the development process. It involves inspecting the base code, the initial product design, and every other component that a program is made up of.

Software inspection involves six steps:

- Prepare for the inspection meeting with the software development team

- Give an overview of the software/product and collect initial evaluations

- Members individually prepare their notes

- A second inspection meeting is held to discuss the evaluations

- Rework the software based on the feedback received

- Follow up to test the backend of the software functionality

Walkthrough: Structured Walkthroughs

Structured walkthroughs are less formal than inspections and include only four steps:

- A team of testers gathers necessary information about the software

- The team conducts a “walkthrough” for the software themselves and designs a few test cases

- These results are sent to another responsible tester

- A follow-up meeting is held where the tester goes through a small set of test cases, determining whether further work is required

The last step can be done continuously throughout development using a few test cases for each structured walkthrough.

Inspection: Technical Reviews

Technical reviews of software include higher-level testing and involve different departments. The goal of this type of testing is to determine if the software conforms to development standards and internal and external guidelines and specifications.

Technical reviews don’t follow a formal process but usually consist of distributing materials to all involved testers, developers, and management, preparing evaluation indicators, and then going through the program to check how each part scores with those indicators.

The deliverable is a report that outlines the next steps and areas for improvement.
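The scoring step can be sketched in a few lines of Python. The indicators, thresholds, 1-5 scale, and program parts below are all hypothetical; the function simply compares each part’s scores with the agreed indicators and turns every shortfall into an item for the next-steps report.

```python
from dataclasses import dataclass


@dataclass
class Indicator:
    name: str
    threshold: int  # minimum acceptable score on an assumed 1-5 scale


# Hypothetical evaluation indicators agreed on before the review.
INDICATORS = [
    Indicator("Follows coding standard", 4),
    Indicator("Meets external specification", 4),
    Indicator("Documentation complete", 3),
]


def review_report(scores: dict[str, dict[str, int]]) -> list[str]:
    """`scores` maps each part of the program to {indicator name: score}.
    Returns the next steps: every part/indicator pair that falls short."""
    next_steps = []
    for part, part_scores in scores.items():
        for indicator in INDICATORS:
            score = part_scores.get(indicator.name, 0)  # unscored counts as a miss
            if score < indicator.threshold:
                next_steps.append(
                    f"{part}: improve '{indicator.name}' "
                    f"(scored {score}, needs {indicator.threshold})"
                )
    return next_steps


if __name__ == "__main__":
    scores = {
        "Scheduling module": {"Follows coding standard": 5,
                              "Meets external specification": 3,
                              "Documentation complete": 4},
        "Sensor driver": {"Follows coding standard": 4,
                          "Meets external specification": 4},
    }
    for step in review_report(scores):
        print(step)
```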

Dynamic vs. Static Software Testing: Which Is the Better Option?

Software testing can take different paths, and there are multiple techniques that testers can use to ensure their software works the way it should. So which type of testing does your development team need: Dynamic or static?

The answer is both.

Static testing is necessary before the advanced development work begins. It serves as a baseline for building a healthy and well-performing product. Dynamic testing is then needed to validate that what you’re building works well and your features don’t deviate from their intended functionality.

So, when you start planning out your software development process, plan to include both static and dynamic testing methods.

Conclusion

Software testing is a necessary process for all developers because it determines whether what you’re building is right for the targeted customer and the general market and, most importantly, whether all features function properly.

While some companies rely on an in-house testing team, you can also tap into the expertise of a professional testing company that specializes in software testing. In fact, outsourcing is highly recommended since it’s typically much more time-efficient and cost-effective.

The good news is that XBOSoft is an experienced software testing company that offers comprehensive strategies and testing methods, including static and dynamic testing and everything in between. With the right combination of skills to ensure the best user experience and security for your program, we’ll help you build stable software that works exactly how you envisioned it.

Learn more about XBOSoft today.
